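As a DBA of many years, I find Hive the most familiar product in the Hadoop family. After all, I already know SQL well, and I no longer have to suffer through writing MapReduce jobs by hand.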

First, a brief introduction to Hive.

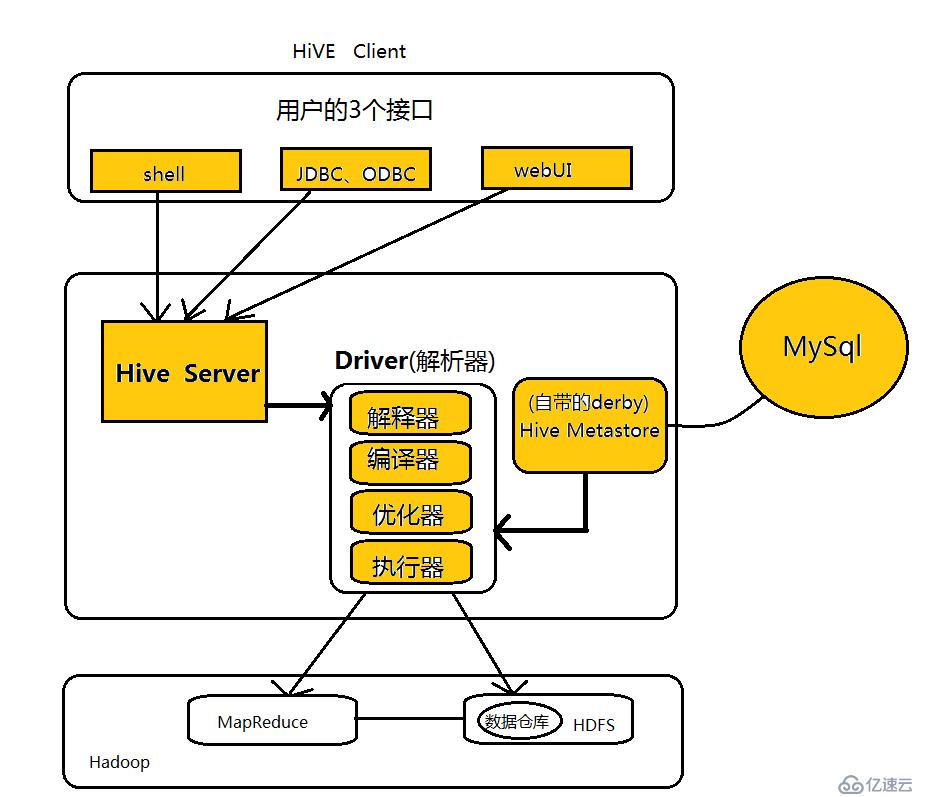

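Hive is a data warehouse solution built on Hadoop. Because Hadoop itself scales well and is highly fault tolerant in both storage and computation, a data warehouse built with Hive inherits these properties.

Put simply, Hive adds a SQL interface on top of Hadoop: it translates SQL into MapReduce jobs and runs them on Hadoop. This lets data developers and analysts use SQL to aggregate and analyze massive datasets, without the trouble of writing MapReduce programs in a programming language.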

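Now for the Hive installation. The prerequisite is that HDFS and YARN are already installed and running. For the HDFS installation, see:

Hadoop Cluster (1): ZooKeeper Setup

Hadoop Cluster (2): HDFS Setup

Hadoop Cluster (3): HBase Setup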

For the Hive download, I used hive-1.2.1, which is no longer available for download. Pick a newer version as needed.

http://hive.apache.org/downloads.html

tar -xzvf apache-hive-1.2.1-bin.tar.gz

## javax.jdo.option.ConnectionURL: set the value for this name to the MySQL address, for example:

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true</value>

## javax.jdo.option.ConnectionDriverName: set the value for this name to the MySQL driver class name. After my change it looks like this:

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

## javax.jdo.option.ConnectionUserName: set the value to the MySQL login name:

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

## javax.jdo.option.ConnectionPassword: set the value to the MySQL login password:

<name>javax.jdo.option.ConnectionPassword</name>

<value>change to your password</value>

## hive.metastore.schema.verification: set the value to false:

<name>hive.metastore.schema.verification</name>

<value>false</value>

Create the corresponding directories

mkdir -p /data1/hiveLogs-security/;chown -R hive:hadoop /data1/hiveLogs-security/

mkdir -p /data1/hiveData-security/;chown -R hive:hadoop /data1/hiveData-security/

mkdir -p /tmp/hive-security/operation_logs; chown -R hive:hadoop /tmp/hive-security/operation_logs

Create the HDFS directories

hadoop fs -mkdir /tmp

hadoop fs -mkdir -p /user/hive/warehouse

hadoop fs -chmod g+w /tmp

hadoop fs -chmod g+w /user/hive/warehouse

Initialize Hive

[hive@aznbhivel01 ~]$ schematool -initSchema -dbType mysql

Metastore connection URL: jdbc:mysql://172.16.13.88:3306/hive_beta?useUnicode=true&characterEncoding=UTF-8&createDatabaseIfNotExist=true

Metastore Connection Driver : com.mysql.jdbc.Driver

Metastore connection User: envision

Starting metastore schema initialization to 1.2.0

Initialization script hive-schema-1.2.0.mysql.sql

Initialization script completed

schemaTool completed

-7. Solution: start the Hive Metastore Server process with the following command. This leads to the next problem.

[hive@aznbhivel01 ~]$ Starting Hive Metastore Server

hiveorg.apache.thrift.transport.TTransportException: java.io.IOException: Login failure for hive/aznbhivel01.liang.com@ENVISIONCN.COM from keytab /etc/security/keytab/hive.keytab: javax.security.auth.login.LoginException: Unable to obtain password from user

at org.apache.hadoop.hive.thrift.HadoopThriftAuthBridge$Server.<init>(HadoopThriftAuthBridge.java:358)

at org.apache.hadoop.hive.thrift.HadoopThriftAuthBridge.createServer(HadoopThriftAuthBridge.java:102)

at org.apache.hadoop.hive.metastore.HiveMetaStore.startMetaStore(HiveMetaStore.java:5990)

at org.apache.hadoop.hive.metastore.HiveMetaStore.main(HiveMetaStore.java:5909)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:606)

at org.apache.hadoop.util.RunJar.run(RunJar.java:221)

at org.apache.hadoop.util.RunJar.main(RunJar.java:136)

Caused by: java.io.IOException: Login failure for hive/aznbhivel01.liang.com@ENVISIONCN.COM from keytab /etc/security/keytab/hive.keytab: javax.security.auth.login.LoginException: Unable to obtain password from user
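The "Unable to obtain password from user" message often means the process could not read a usable key from the keytab file. As a minimal sketch of a first check (the function name `check_keytab` is made up for illustration and is not part of Hive or Kerberos), the following reports whether a keytab exists and is readable by the user running it:

```shell
# check_keytab FILE: report whether FILE exists and is readable by the
# current user. Prints a short diagnosis; returns 1 if the file is
# missing and 2 if it exists but cannot be read.
check_keytab() {
    if [ ! -e "$1" ]; then
        echo "missing: $1"
        return 1
    fi
    if [ ! -r "$1" ]; then
        echo "not readable by $(id -un): $1"
        return 2
    fi
    echo "ok: $1"
}
```

Run it as the user that starts the metastore, for example `check_keytab /etc/security/keytab/hive.keytab`. If the file is readable, `klist -kt` on the same file lists the principals it contains, which helps confirm that the expected hive principal is actually in it.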

-8. The keytab could not be found; fix the permissions on the hive.keytab file.

$ ll /etc/security/keytab/

total 100

-r-------- 1 hbase hadoop 18002 Dec 5 17:06 hbase.keytab

-r-------- 1 hdfs hadoop 18002 Dec 5 17:04 hdfs.keytab

-r-------- 1 hive hadoop 18002 Dec 5 17:06 hive.keytab

-r-------- 1 mapred hadoop 18002 Dec 5 17:06 mapred.keytab

-r-------- 1 yarn hadoop 18002 Dec 5 17:06 yarn.keytab

-9. Restart the metastore again

$ hive --service metastore &

[2] 41285

[1] Killed hive --service metastore (wd: ~)

(wd now: /etc/security)

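[hive@aznbhivel01 security]$ Starting Hive Metastore Server

-10. Then start HiveServer2

hive --service hiveserver2 &

-11. Startup still failed, which was puzzling. The error itself is clear: the Kerberos KDC cannot find this server. Yet kinit had already succeeded, and the earlier log lines also report a successful login.

Regenerating the keytab did not help either. Finally I wondered whether the cause was that hive-site.xml used an IP address. Changing it to the hostname, "thrift://aznbhivel01.liang.com:9083", solved the problem.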
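A likely explanation is that the client builds the service principal it asks the KDC for from the host part of the metastore URI, so an IP address yields a principal that does not exist in the Kerberos database. As a small sketch for spotting this in a config (the helper names `uri_host` and `host_is_ipv4` are invented here for illustration), the following extracts the host from a URI and tests whether it looks like an IPv4 address:

```shell
# uri_host URI: print the host part of a URI such as thrift://host:9083
uri_host() {
    _rest="${1#*://}"      # drop the scheme
    _rest="${_rest%%/*}"   # drop any path
    printf '%s\n' "${_rest%%:*}"   # drop the port
}

# host_is_ipv4 HOST: succeed if HOST looks like a dotted-quad address.
# Only the shape is checked (digits and exactly three dots), not the range.
host_is_ipv4() {
    case "$1" in
        ''|*[!0-9.]*) return 1 ;;   # empty, or has non-digit, non-dot characters
        *.*.*.*.*)    return 1 ;;   # more than three dots
        *.*.*.*)      return 0 ;;   # exactly three dots
        *)            return 1 ;;
    esac
}
```

For example, `host_is_ipv4 "$(uri_host thrift://172.16.13.88:9083)"` succeeds, which would flag the value that caused the failure here, while the hostname form does not match.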

~~~~Configuration that needs to be changed~~~

<name>hive.metastore.uris</name>

<value>thrift://aznbhivel01.liang.com:9083</value>

<description>Thrift URI for the remote metastore. Used by metastore client to connect to remote metastore.</description>

~~~~~~~~~Log output~~~~~~

2017-12-07 16:16:04,300 DEBUG [main]: security.UserGroupInformation (UserGroupInformation.java:login(221)) - hadoop login

2017-12-07 16:16:04,302 DEBUG [main]: security.UserGroupInformation (UserGroupInformation.java:commit(156)) - hadoop login commit

2017-12-07 16:16:04,303 DEBUG [main]: security.UserGroupInformation (UserGroupInformation.java:commit(170)) - using kerberos user:hive/aznbhivel01.liang.com@LIANG.COM

2017-12-07 16:16:04,303 DEBUG [main]: security.UserGroupInformation (UserGroupInformation.java:commit(192)) - Using user: "hive/aznbhivel01.liang.com@LIANG.COM" with name hive/aznbhivel01.liang.com@LIANG.COM

2017-12-07 16:16:04,303 DEBUG [main]: security.UserGroupInformation (UserGroupInformation.java:commit(202)) - User entry: "hive/aznbhivel01.liang.com@LIANG.COM"

2017-12-07 16:16:04,304 INFO [main]: security.UserGroupInformation (UserGroupInformation.java:loginUserFromKeytab(965)) - Login successful for user hive/aznbhivel01.liang.com@LIANG.COM using keytab file /etc/security/keytab/hive.keytab

........

Client Addresses Null

2017-12-07 16:16:04,408 INFO [main]: hive.metastore (HiveMetaStoreClient.java:open(386)) - Trying to connect to metastore with URI thrift://172.16.13.88:9083

2017-12-07 16:16:04,446 DEBUG [main]: security.UserGroupInformation (UserGroupInformation.java:logPrivilegedAction(1681)) - PrivilegedAction as:hive/aznbhivel01.liang.com@LIANG.COM (auth:KERBEROS) from:org.apache.hadoop.hive.thrift.client.TUGIAssumingTransport.open(TUGIAssumingTransport.java:49)

2017-12-07 16:16:04,448 DEBUG [main]: transport.TSaslTransport (TSaslTransport.java:open(261)) - opening transport org.apache.thrift.transport.TSaslClientTransport@5bb4d6c0

2017-12-07 16:16:04,460 ERROR [main]: transport.TSaslTransport (TSaslTransport.java:open(315)) - SASL negotiation failure

javax.security.sasl.SaslException: GSS initiate failed [Caused by GSSException: No valid credentials provided (Mechanism level: Server not found in Kerberos database (7) - UNKNOWN_SERVER)]

at com.sun.security.sasl.gsskerb.GssKrb5Client.evaluateChallenge(GssKrb5Client.java:212)

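at org.apache.thrift.transport.TSaslClientTransport.handleSaslStartMessage(TSaslClientTransport.java:94)

-12. Then I hit a permission error, and I could not tell which permission was wrong. HDFS already shows files written by Hive, so the permissions there should be correct. The analysis continues.....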

2017-12-07 20:30:59,168 DEBUG [IPC Client (1612726596) connection to AZcbetannL02.liang.com/172.16.13.77:9000 from hive/aznbhivel01.liang.com@LIANG.COM]: ipc.Client (Client.java:receiveRpcResponse(1089)) - IPC Client (1612726596) connection to AZcbetannL02.liang.com/172.16.13.77:9000 from hive/aznbhivel01.liang.com@LIANG.COM got value #8

2017-12-07 20:30:59,168 DEBUG [main]: ipc.ProtobufRpcEngine (ProtobufRpcEngine.java:invoke(250)) - Call: getFileInfo took 2ms

2017-12-07 20:30:59,168 INFO [main]: server.HiveServer2 (HiveServer2.java:stop(305)) - Shutting down HiveServer2

2017-12-07 20:30:59,169 INFO [main]: server.HiveServer2 (HiveServer2.java:startHiveServer2(368)) - Exception caught when calling stop of HiveServer2 before retrying start

java.lang.NullPointerException

at org.apache.hive.service.server.HiveServer2.stop(HiveServer2.java:309)

at org.apache.hive.service.server.HiveServer2.startHiveServer2(HiveServer2.java:366)

at org.apache.hive.service.server.HiveServer2.access$700(HiveServer2.java:74)

at org.apache.hive.service.server.HiveServer2$StartOptionExecutor.execute(HiveServer2.java:588)

at org.apache.hive.service.server.HiveServer2.main(HiveServer2.java:461)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:606)

at org.apache.hadoop.util.RunJar.run(RunJar.java:221)

at org.apache.hadoop.util.RunJar.main(RunJar.java:136)

2017-12-07 20:30:59,170 WARN [main]: server.HiveServer2 (HiveServer2.java:startHiveServer2(376)) - Error starting HiveServer2 on attempt 1, will retry in 60 seconds

java.lang.RuntimeException: Error applying authorization policy on hive configuration: java.lang.RuntimeException: java.io.IOException: Permission denied

at org.apache.hive.service.cli.CLIService.init(CLIService.java:114)

at org.apache.hive.service.CompositeService.init(CompositeService.java:59)

at org.apache.hive.service.server.HiveServer2.init(HiveServer2.java:100)

at org.apache.hive.service.server.HiveServer2.startHiveServer2(HiveServer2.java:345)

at org.apache.hive.service.server.HiveServer2.access$700(HiveServer2.java:74)

at org.apache.hive.service.server.HiveServer2$StartOptionExecutor.execute(HiveServer2.java:588)

at org.apache.hive.service.server.HiveServer2.main(HiveServer2.java:461)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:606)

at org.apache.hadoop.util.RunJar.run(RunJar.java:221)

at org.apache.hadoop.util.RunJar.main(RunJar.java:136)

Caused by: java.lang.RuntimeException: java.lang.RuntimeException: java.io.IOException: Permission denied

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:528)

at org.apache.hive.service.cli.CLIService.applyAuthorizationConfigPolicy(CLIService.java:127)

at org.apache.hive.service.cli.CLIService.init(CLIService.java:112)

... 12 more

Caused by: java.lang.RuntimeException: java.io.IOException: Permission denied

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:521)

... 14 more

Caused by: java.io.IOException: Permission denied

at java.io.UnixFileSystem.createFileExclusively(Native Method)

at java.io.File.createNewFile(File.java:1006)

at java.io.File.createTempFile(File.java:1989)

at org.apache.hadoop.hive.ql.session.SessionState.createTempFile(SessionState.java:824)

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:519)

... 14 more

I found the strace approach on Google, to see which permission was the problem:

strace is your friend if you are on Linux. Try the following from the

shell in which you are starting hive...

strace -f -e trace=file service hive-server2 start 2>&1 | grep ermission

You should see the file it can't read/write.

In the end, the permissions on the /tmp/hive-security path turned out to be wrong. After fixing them, this problem went away.
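The bottom of the stack trace shows the failure coming from a local temp file creation, not from HDFS. A rough way to reproduce that check without strace, sketched here with a hypothetical helper name `can_create_in`, is to try creating and removing a file in the directory as the user that runs HiveServer2:

```shell
# can_create_in DIR: try to create and then remove a temporary file in
# DIR, which is roughly what the failing createTempFile call needs to do.
# Returns non-zero if the file cannot be created (wrong owner or mode,
# or the directory does not exist).
can_create_in() {
    _probe=$(mktemp "$1/probe.XXXXXX" 2>/dev/null) || return 1
    rm -f "$_probe"
}
```

A possible use is `can_create_in /tmp/hive-security || ls -ld /tmp/hive-security`, which prints the owner and mode of the directory only when the probe fails.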

<name>hive.exec.local.scratchdir</name>

<value>/tmp/hive-security</value>

-13. On to the next problem:

2017-12-07 20:58:56,749 DEBUG [IPC Parameter Sending Thread #0]: ipc.Client (Client.java:run(1032)) - IPC Client (1612726596) connection to AZcbetannL02.liang.com/172.16.13.77:9000 from hive/aznbhivel01.liang.com@LIANG.COM sending #8

2017-12-07 20:58:56,750 DEBUG [IPC Client (1612726596) connection to AZcbetannL02.liang.com/172.16.13.77:9000 from hive/aznbhivel01.liang.com@LIANG.COM]: ipc.Client (Client.java:receiveRpcResponse(1089)) - IPC Client (1612726596) connection to AZcbetannL02.liang.com/172.16.13.77:9000 from hive/aznbhivel01.liang.com@LIANG.COM got value #8

2017-12-07 20:58:56,750 DEBUG [main]: ipc.ProtobufRpcEngine (ProtobufRpcEngine.java:invoke(250)) - Call: getFileInfo took 2ms

2017-12-07 20:58:56,763 ERROR [main]: session.SessionState (SessionState.java:setupAuth(749)) - Error setting up authorization: java.lang.ClassNotFoundException: org.apache.ranger.authorization.hive.authorizer.RangerHiveAuthorizerFactory

org.apache.hadoop.hive.ql.metadata.HiveException: java.lang.ClassNotFoundException: org.apache.ranger.authorization.hive.authorizer.RangerHiveAuthorizerFactory

at org.apache.hadoop.hive.ql.metadata.HiveUtils.getAuthorizeProviderManager(HiveUtils.java:391)

A Google search for the keyword org.apache.ranger.authorization.hive.authorizer.RangerHiveAuthorizerFactory

turned up this article:

https://www.cnblogs.com/wyl9527/p/6835620.html

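After running the start command, the Hive service needs to be restarted.

After the installation finishes:

you will see several new configuration files.

Modify the hiveserver2-site.xml file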

<property>

<name>hive.security.authorization.enabled</name>

<value>true</value>

</property>

<property>

<name>hive.security.authorization.manager</name>

<value>org.apache.ranger.authorization.hive.authorizer.RangerHiveAuthorizerFactory</value>

</property>

<property>

<name>hive.security.authenticator.manager</name>

<value>org.apache.hadoop.hive.ql.security.SessionStateUserAuthenticator</value>

</property>

<property>

<name>hive.conf.restricted.list</name>

<value>hive.security.authorization.enabled,hive.security.authorization.manager,hive.security.authenticator.manager</value>

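</property>

Ranger authorization is not in use at the moment, so I decided to disable it. But how?

I simply deleted the hiveserver2-site.xml file. One more step forward: hiveserver2 started successfully. I got into hive and hit the next error.

-14. Hive now starts normally, and queries can be run through the hive command. But as shown below, the command reports OK and yet the query result is not returned.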

[hive@aznbhivel01 hive]$ hive

Logging initialized using configuration in file:/usr/local/hadoop/apache-hive-1.2.1/conf/hive-log4j.properties

hive> show databases;

OK

Failed with exception java.io.IOException:java.lang.RuntimeException: Error in configuring object

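Time taken: 0.867 seconds

A fix found via Baidu:

http://blog.csdn.net/wodedipang_/article/details/72720257

However, my configuration did not have the situation described in that article. I suspected a problem with this directory, such as its permissions: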

<property>

<name>hive.exec.local.scratchdir</name>

<value>/tmp/hive-security</value>

<description>Local scratch space for Hive jobs</description>

</property>

Finally, hive.log contained the following error, which indicates a missing jar:

Caused by: java.lang.IllegalArgumentException: Compression codec com.hadoop.compression.lzo.LzoCodec not found.

at org.apache.hadoop.io.compress.CompressionCodecFactory.getCodecClasses(CompressionCodecFactory.java:139)

at org.apache.hadoop.io.compress.CompressionCodecFactory.<init>(CompressionCodecFactory.java:179)

at org.apache.hadoop.mapred.TextInputFormat.configure(TextInputFormat.java:45)

... 26 more

Caused by: java.lang.ClassNotFoundException: Class com.hadoop.compression.lzo.LzoCodec not found

at org.apache.hadoop.conf.Configuration.getClassByName(Configuration.java:2101)

at org.apache.hadoop.io.compress.CompressionCodecFactory.getCodecClasses(CompressionCodecFactory.java:132)

... 28 more

-15. Hadoop's core-site.xml configures the lzo.LzoCodec compression codec, so the matching jar is required for MapReduce to run properly.

<property>

<name>io.compression.codecs</name><value>org.apache.hadoop.io.compress.DefaultCodec,org.apache.hadoop.io.compress.GzipCodec,org.apache.hadoop.io.compress.BZip2Codec,com.hadoop.compression.lzo.LzoCodec,org.apache.hadoop.io.compress.SnappyCodec,com.hadoop.compression.lzo.LzopCodec</value>

</property>

<property>

<name>io.compression.codec.lzo.class</name>

<value>com.hadoop.compression.lzo.LzoCodec</value>

</property>

<property>

<name>hadoop.http.staticuser.user</name>

<value>hadoop</value>

</property>

<property>

<name>lzo.text.input.format.ignore.nonlzo</name>

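<value>false</value>

</property>

Copying the required jar over from another working environment solved it.

Note that the lzo jar is needed not only on the Hive server but on every YARN/MapReduce machine. Otherwise any MapReduce job that involves lzo compression will fail, not just jobs launched by Hive.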
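Since the jar has to be present on every node, a small check can be scripted. The sketch below is only an illustration: `has_lzo_jar` is a made-up helper, and the host names in the commented loop are placeholders, not hosts from this cluster.

```shell
# has_lzo_jar DIR: succeed if DIR contains at least one hadoop-lzo jar.
has_lzo_jar() {
    for _jar in "$1"/hadoop-lzo*.jar; do
        # If nothing matches, the glob stays unexpanded and -e fails.
        [ -e "$_jar" ] && return 0
    done
    return 1
}

# Example loop over nodes (placeholder host names, assumes ssh access):
# for h in node1 node2 node3; do
#     ssh "$h" 'ls /usr/local/hadoop/hadoop-release/share/hadoop/common/hadoop-lzo*.jar' \
#         || echo "lzo jar missing on $h"
# done
```

On a single machine, `has_lzo_jar /usr/local/hadoop/hadoop-release/share/hadoop/common` checks the directory used in this post.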

# pwd

/usr/local/hadoop/hadoop-release/share/hadoop/common

# ls |grep lzo

hadoop-lzo-0.4.21-SNAPSHOT.jar

At this point, the Hive installation is complete.

Having climbed out of one pit after another, let's enjoy the output of a Hive query:

hive> select count(*) from test.testxx;

Query ID = hive_20171224121853_d96ed531-7e09-438d-b383-bc2a715753fc

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks determined at compile time: 1

Starting Job = job_1513915190261_0008, Tracking URL = https://aznbrmnl02.liang.com:8089/proxy/application_1513915190261_0008/

Kill Command = /usr/local/hadoop/hadoop-2.7.1/bin/hadoop job -kill job_1513915190261_0008

Hadoop job information for Stage-1: number of mappers: 3; number of reducers: 1

2017-12-24 12:19:11,332 Stage-1 map = 0%, reduce = 0%

2017-12-24 12:19:19,666 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 3.85 sec

2017-12-24 12:19:30,064 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 5.43 sec

MapReduce Total cumulative CPU time: 5 seconds 430 msec

Ended Job = job_1513915190261_0008

MapReduce Jobs Launched:

Stage-Stage-1: Map: 3 Reduce: 1 Cumulative CPU: 5.43 sec HDFS Read: 32145 HDFS Write: 5 SUCCESS

Total MapReduce CPU Time Spent: 5 seconds 430 msec

OK

2526

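Time taken: 38.834 seconds, Fetched: 1 row(s)

Points to note

-1. MySQL's character set here is latin1. That works for installing Hive, but later on, especially once Chinese text is stored, the text will not display correctly. So after installing Hive, I recommend changing the character set to UTF8.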

mysql> SHOW VARIABLES LIKE 'character%';

+--------------------------+----------------------------+

| Variable_name | Value |

+--------------------------+----------------------------+

| character_set_client | utf8 |

| character_set_connection | utf8 |

| character_set_database | latin1 |

| character_set_filesystem | binary |

| character_set_results | utf8 |

| character_set_server | latin1 |

| character_set_system | utf8 |

| character_sets_dir | /usr/share/mysql/charsets/ |

+--------------------------+----------------------------+

8 rows in set (0.00 sec)

-2. Change the character set

-# vi /etc/my.cnf

[mysqld]

datadir=/var/lib/mysql

socket=/var/lib/mysql/mysql.sock

default-character-set = utf8

character_set_server = utf8

-# Disabling symbolic-links is recommended to prevent assorted security risks

symbolic-links=0

log-error=/var/log/mysqld.log

pid-file=/var/run/mysqld/mysqld.pid

-3. After the change

mysql> SHOW VARIABLES LIKE 'character%';

+--------------------------+----------------------------+

| Variable_name | Value |

+--------------------------+----------------------------+

| character_set_client | utf8 |

| character_set_connection | utf8 |

| character_set_database | utf8 |

| character_set_filesystem | binary |

| character_set_results | utf8 |

| character_set_server | utf8 |

| character_set_system | utf8 |

| character_sets_dir | /usr/share/mysql/charsets/ |

+--------------------------+----------------------------+

8 rows in set (0.00 sec)

Ways to connect to Hive

a. Connect directly with the hive CLI. If Kerberos is enabled, authenticate with kinit first.

su - hive

hive

b. Connect with beeline

beeline -u "jdbc:hive2://hive-hostname:10000/default;principal=hive/_HOST@LIANG.COM"

For a HiveServer2 HA setup, connect as follows:

beeline -u "jdbc:hive2://zookeeper1-ip:2181,zookeeper2-ip:2181,zookeeper3-ip:2181/;serviceDiscoveryMode=zooKeeper;zooKeeperNamespace=hiveserver2_zk;principal=hive/_HOST@LIANG.COM"

Without Kerberos or other security authentication, beeline needs the login user specified explicitly when connecting to Hive.

beeline -u "jdbc:hive2://127.0.0.1:10000/default;" -n hive
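The direct (non-ZooKeeper) URLs above differ only in host, port, database, and the optional principal. As a minimal sketch, a hypothetical helper `hs2_jdbc_url` (not part of beeline) can assemble them so that scripts do not repeat the string:

```shell
# hs2_jdbc_url HOST PORT DB [PRINCIPAL]
# Print a HiveServer2 JDBC URL. The ;principal=... part is appended only
# when a fourth argument is given, i.e. for Kerberos-secured clusters.
hs2_jdbc_url() {
    _url="jdbc:hive2://$1:$2/$3"
    if [ -n "${4:-}" ]; then
        _url="$_url;principal=$4"
    fi
    printf '%s\n' "$_url"
}
```

With Kerberos this could be used as `beeline -u "$(hs2_jdbc_url hive-hostname 10000 default 'hive/_HOST@LIANG.COM')"`, and without it as `beeline -u "$(hs2_jdbc_url 127.0.0.1 10000 default)" -n hive`.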

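In addition, does Hive always go through MapReduce when it executes a query?

Since Hive 0.10.0, for efficiency, simple queries (a plain select without count, sum, group by, and the like) do not go through map/reduce. Hive reads the HDFS files directly and applies the filter. The benefit is that no new MR job is launched, so execution is considerably faster. The downside is a less friendly user experience: with large data volumes you may still wait a long time with no feedback at all.

Changing this is simple. hive-site.xml has a configuration parameter called

hive.fetch.task.conversion

Set it to more and simple queries (SELECT, filters, LIMIT) skip map/reduce. Set it to minimal and only SELECT *, filters on partition columns, and LIMIT skip map/reduce; other simple selects still launch a job. From Hive 0.14.0 on, none turns the conversion off entirely.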

----Update 2018.2.11-----

If you re-initialize Hive's MySQL database, first log in to MySQL and drop the existing database. Otherwise you will hit the error below.

-# su - hive

[hive@aznbhivel01 ~]$ schematool -initSchema -dbType mysql

Metastore connection URL: jdbc:mysql://10.24.101.88:3306/hive_beta?useUnicode=true&characterEncoding=UTF-8&createDatabaseIfNotExist=true

Metastore Connection Driver : com.mysql.jdbc.Driver

Metastore connection User: envision

Starting metastore schema initialization to 1.2.0

Initialization script hive-schema-1.2.0.mysql.sql

Error: Specified key was too long; max key length is 3072 bytes (state=42000,code=1071)

org.apache.hadoop.hive.metastore.HiveMetaException: Schema initialization FAILED! Metastore state would be inconsistent !!

schemaTool failed

After dropping the original Hive database, initializing again succeeded right away.

[hive@aznbhivel01 ~]$ schematool -initSchema -dbType mysql

Metastore connection URL: jdbc:mysql://10.24.101.88:3306/hive_beta?useUnicode=true&characterEncoding=UTF-8&createDatabaseIfNotExist=true

Metastore Connection Driver : com.mysql.jdbc.Driver

Metastore connection User: envision

Starting metastore schema initialization to 1.2.0

Initialization script hive-schema-1.2.0.mysql.sql

Initialization script completed

schemaTool completed

Starting and stopping Hive:

1. Start the metastore

nohup /usr/local/hadoop/hive-release/bin/hive --service metastore --hiveconf hive.log4j.file=/usr/local/hadoop/hive-release/conf/meta-log4j.properties > /data1/hiveLogs-security/metastore.log 2>&1 &

2. Start hiveserver2

nohup /usr/local/hadoop/hive-release/bin/hive --service hiveserver2 > /data1/hiveLogs-security/hiveserver2.log 2>&1 &

3. Stop HiveServer2

kill -9 `ps ax --cols 2000 | grep java | grep HiveServer2 | grep -v 'ps ax' | awk '{print $1;}'`

4. Stop the metastore

kill -9 `ps ax --cols 2000 | grep java | grep MetaStore | grep -v 'ps ax' | awk '{print $1;}'`
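The commands above go straight to kill -9, which gives the process no chance to shut down cleanly. As a gentler variant, and only a sketch rather than anything Hive ships, the hypothetical helper `stop_pid` below sends SIGTERM first and falls back to SIGKILL after a timeout:

```shell
# stop_pid PID [TIMEOUT]: send SIGTERM, wait up to TIMEOUT seconds
# (default 10) for the process to exit, then fall back to SIGKILL.
stop_pid() {
    _pid="$1"
    _left="${2:-10}"
    kill "$_pid" 2>/dev/null || return 0    # already gone, nothing to do
    while [ "$_left" -gt 0 ] && kill -0 "$_pid" 2>/dev/null; do
        sleep 1
        _left=$((_left - 1))
    done
    if kill -0 "$_pid" 2>/dev/null; then
        kill -9 "$_pid" 2>/dev/null || true # still alive: force it
    fi
    return 0
}

# Example, assuming pgrep is available to find the PIDs:
# for p in $(pgrep -f HiveServer2); do stop_pid "$p" 30; done
```

The PIDs can equally come from the ps/grep/awk pipeline used above. The timeout value is a judgment call, since a busy HiveServer2 may need longer than the default to finish.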
